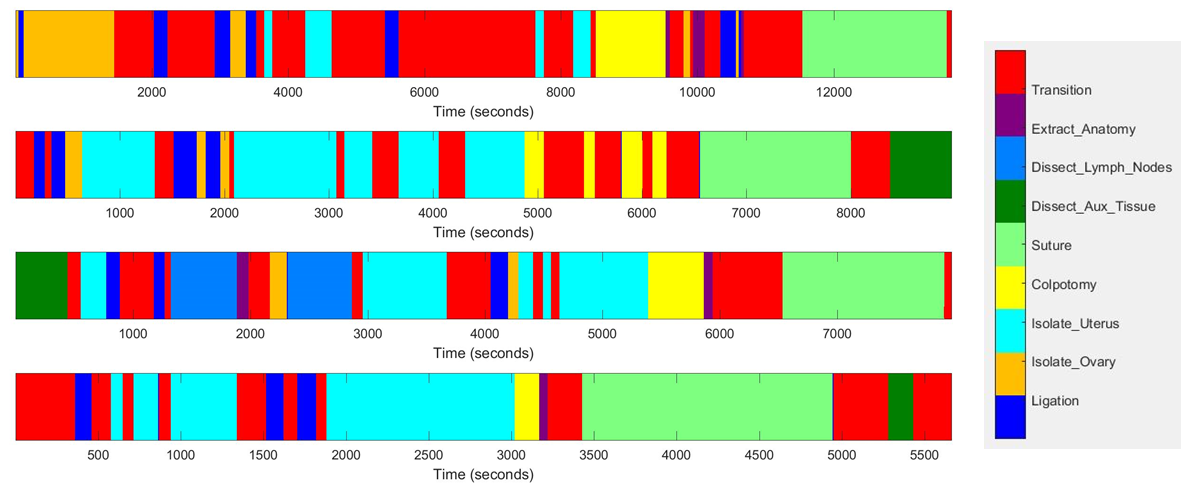

Sample phase segmentation of hysterectomy by a human annotator

Aims: Develop methods that automatically segment a surgical procedure into phases and assess technical skill

Description: This project aims to develop methods that can automatically 1) segment a surgical procedure into its constituent steps (phases), and 2) assess surgical skill within each step. We have collected a dataset of 30 robot-assisted laparoscopic hysterectomy procedures performed using the da Vinci system. The dataset contains endoscopic stereo video, instrument and console motion data, and user events recorded on the system.

Funding: Link Foundation Fellowship, NSF NRI Award 1227277

People: Anand Malpani, S. Swaroop Vedula, Chi Chiung Grace Chen, Gregory D. Hager

Publications:

- Berges AJ, Vedula SS, Chen CCG, Malpani A. Is There a Relationship Between Warm-Up Virtual Reality Simulation and Trainee Robot-Assisted Laparoscopic Hysterectomy Performance? J Minim Invasive Gynecol. 2018 Nov 1;25(7):S92. (under preparation)

- Malpani A, Martinez N, Vedula SS, Hager GD, Chen CCG. Automated Skill Classification using Time and Motion Efficiency Metrics in Vaginal Cuff Closure. Society of Gynecologic Surgeons, 44th Annual Scientific Meeting. Orlando, FL; 2018. (under revision)

- Malpani A, Arora S, Vedula SS, Chen CCG, Hager GD. Crowdsourcing surgical activity summaries for phase recognition. LABELS: International Workshop on Large-Scale Annotation of Biomedical Data and Expert Label Synthesis. Granada, Spain; 2018.

- Malpani A, Lea C, Chen CCG, Hager GD. System events: readily accessible features for surgical phase detection. Int J CARS. 2016 May 13;11(6):1201–1209.

- Chen CCG, Tanner E, Malpani A, Vedula SS, Fader AN, Scheib SA, Green IC, Hager GD. Warm-up before robotic hysterectomy does not improve trainee operative performance: a randomized trial. J Minim Invasive Gynecol. 2015 Nov;22(6, Supplement):S34.